Not Enough Tokens, Not Enough Money: An AI Optimist's Crisis of Faith

Apr 23, 2026, 10:00 AM

I've been sitting with a contradictory feeling lately, and I want to write it down.

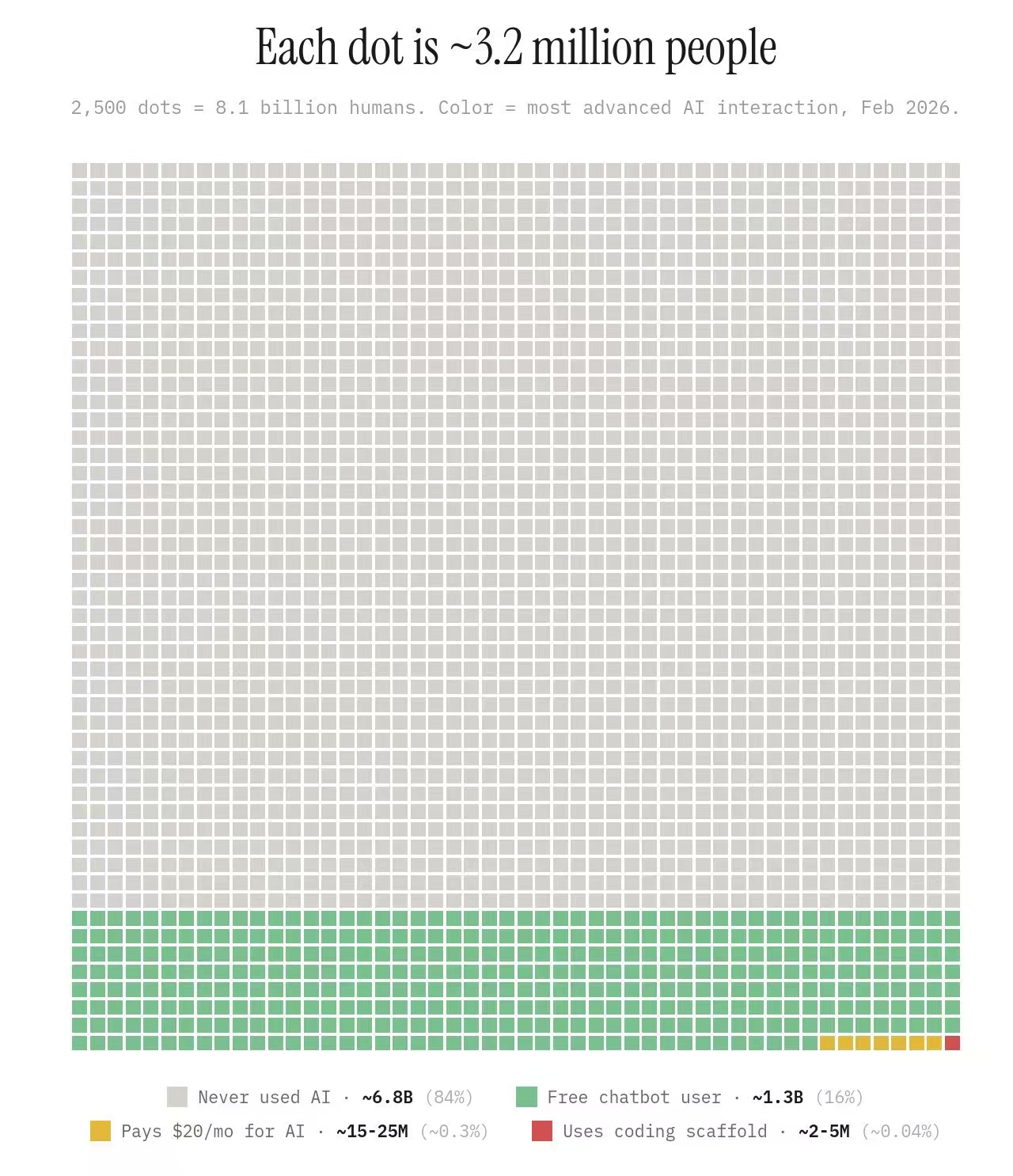

Token consumption is skyrocketing, yet AI is nowhere near mainstream. Look at this chart:

Each dot represents 3.2 million people. The gray ones are people who have never used AI -- 84%, 6.8 billion people. Green dots are free-tier users -- 16%. Paid users? 0.3%. People using coding scaffolds? 0.04%, fewer than 5 million worldwide. You and I are most likely somewhere in that barely visible red dot.
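As a sanity check on those figures, here is a minimal arithmetic sketch. It uses only the numbers quoted above; the world population is not stated in the chart, so the code back-calculates it from "84% = 6.8 billion," which is my own assumption about how the chart was built.

```python
# Figures quoted in the paragraph above.
PEOPLE_PER_DOT = 3.2e6          # each dot represents 3.2 million people
NEVER_USED_SHARE = 0.84         # 84% have never used AI
NEVER_USED_PEOPLE = 6.8e9       # ...which the text equates to 6.8 billion people
PAID_SHARE = 0.003              # 0.3% paid users
CODING_SCAFFOLD_SHARE = 0.0004  # 0.04% use coding scaffolds

# Assumption: the population behind the chart is implied by the 84% figure.
implied_population = NEVER_USED_PEOPLE / NEVER_USED_SHARE


def dots_for(share: float) -> float:
    """Number of chart dots that a given share of the population occupies."""
    return share * implied_population / PEOPLE_PER_DOT


total_dots = implied_population / PEOPLE_PER_DOT
coding_users = CODING_SCAFFOLD_SHARE * implied_population

print(f"implied population:    {implied_population / 1e9:.2f} billion")
print(f"total dots:            {total_dots:.0f}")
print(f"paid-user dots:        {dots_for(PAID_SHARE):.1f}")
print(f"coding-scaffold users: {coding_users / 1e6:.2f} million")
print(f"coding-scaffold dots:  {dots_for(CODING_SCAFFOLD_SHARE):.2f}")
```

Under that assumption the chart holds roughly 2,500 dots, and the coding-scaffold group works out to about one of them, which is why it is so hard to see.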

And yet, we're already running out of compute. What's more, the U.S. and China are running out in different ways -- China lacks chips, America lacks electricity. One is being strangled by export controls, the other by the laws of physics. How can demand still be at the base of the mountain while supply is already hitting its ceiling?

But then again, I think current token consumption is extraordinarily wasteful.

A huge share of tokens is spent making AI adapt to legacy systems that humans built up over decades. Parsing PDFs, reading Word documents, processing Excel files, interpreting formats designed for printers and human eyes. It's like making a superintelligent mind communicate with you through a fax machine.

Here's a take that might be controversial: in the long run, PDFs, Word documents -- these things shouldn't exist. They are relics of the printer era, deeply hostile to AI. The future should be agent-first, with structured data underneath and what humans see as just a rendering layer generated on demand. (In fact, I already manage my own resume this way -- JSON stores the data, LaTeX compiles it to PDF, the entire pipeline maintained by an AI agent. All I have to say is "update my CV.")
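To make "structured data underneath, rendering layer on top" concrete, here is a minimal sketch of the JSON-to-LaTeX step. It is not my actual pipeline: the schema (`name`, `email`, `experience`), the sample data, and the helper names `escape_latex` and `render_resume` are all hypothetical, chosen only for illustration. Compiling the result to PDF would be a separate step with a LaTeX engine.

```python
import json

# Hypothetical resume data; the field names are illustrative, not a real schema.
RESUME_JSON = """
{
  "name": "Jane Doe",
  "email": "jane@example.com",
  "experience": [
    {"role": "Engineer", "company": "R&D Labs", "years": "2021-2025"},
    {"role": "Analyst", "company": "Acme_Co", "years": "2018-2021"}
  ]
}
"""

# Characters LaTeX treats specially, mapped to safe replacements.
_LATEX_ESCAPES = {
    "\\": r"\textbackslash{}",
    "&": r"\&",
    "%": r"\%",
    "$": r"\$",
    "#": r"\#",
    "_": r"\_",
    "{": r"\{",
    "}": r"\}",
    "~": r"\textasciitilde{}",
    "^": r"\textasciicircum{}",
}


def escape_latex(text: str) -> str:
    """Escape LaTeX special characters one character at a time."""
    return "".join(_LATEX_ESCAPES.get(ch, ch) for ch in text)


def render_resume(data: dict) -> str:
    """Render the structured data as a small standalone LaTeX document."""
    lines = [
        r"\documentclass{article}",
        r"\begin{document}",
        r"\section*{" + escape_latex(data["name"]) + "}",
        escape_latex(data["email"]),
        r"\subsection*{Experience}",
        r"\begin{itemize}",
    ]
    for job in data["experience"]:
        entry = f'{job["role"]}, {job["company"]} ({job["years"]})'
        lines.append(r"\item " + escape_latex(entry))
    lines += [r"\end{itemize}", r"\end{document}"]
    return "\n".join(lines) + "\n"


# The JSON is the source of truth; the LaTeX is regenerated on demand.
latex_source = render_resume(json.loads(RESUME_JSON))
print(latex_source)
```

The point of the design is that an agent only ever edits the JSON. The PDF is a disposable view that can be rebuilt at any time.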

Claude's addendum to this was more precise than mine: the goal is not "agent-friendly" but "agent-first, human-readable." Accountability still falls on people -- contracts need human signatures, audits need human eyes. So formats won't disappear, but they'll shift from being "containers of information" to being "rendering layers for information." I think that's right.

Once the entire ecosystem becomes more AI-native, per-token efficiency will improve dramatically. But here's the thing -- efficiency gains have rarely reduced total consumption. Economists call this the Jevons paradox: when steam engines got more efficient, coal consumption went up, not down. When tokens get cheaper, people will simply use more of them. So compute may never be enough.
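A toy model shows when this rebound actually happens. The sketch below assumes constant-elasticity demand (tokens demanded scale as price to the power of minus the elasticity) and that the per-token price falls in proportion to the efficiency gain. Both are simplifying assumptions of mine, and the elasticity values are hypothetical, not measurements.

```python
def relative_compute_demand(efficiency_gain: float, elasticity: float) -> float:
    """Total compute demand after an efficiency gain, relative to before.

    Assumptions (illustrative only):
      - compute per token falls by a factor of `efficiency_gain`
      - the per-token price falls by the same factor
      - tokens demanded scale as price ** (-elasticity)
    """
    price_ratio = 1.0 / efficiency_gain
    tokens_ratio = price_ratio ** (-elasticity)
    compute_per_token_ratio = 1.0 / efficiency_gain
    return tokens_ratio * compute_per_token_ratio


# Hypothetical elasticities: inelastic, unit-elastic, and elastic demand.
for elasticity in (0.5, 1.0, 1.5):
    ratio = relative_compute_demand(efficiency_gain=10.0, elasticity=elasticity)
    print(f"elasticity {elasticity}: compute demand x{ratio:.2f}")
```

In this model total compute demand grows only when the elasticity is above 1. The Jevons-style outcome is a claim about how elastic token demand is, not an automatic consequence of efficiency.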

At this point, the logical conclusion should be an optimistic one: demand is infinite, the future is bright, go all in on AI.

But I can't convince myself of that.

GPU debt is piling up. Nearly all of U.S. GDP growth is coming from AI infrastructure. OpenAI just raised at an $850 billion valuation with $122 billion in a single round. Every company is telling an AI story, every VC is funding AI, every country is building data centers. The scene looks all too familiar -- the last time I saw something like this was 1999.

If you told me there's no bubble here, I wouldn't believe you.

When I talked through this contradiction with Claude, it offered me a framework from the economist Carlota Perez and her theory of technological revolutions: every major technology goes through an "installation phase" and a "deployment phase." During installation, capital floods in, infrastructure gets overbuilt, and bubbles inflate. Then comes the crash. And then, riding on all that "wasted" infrastructure, the technology enters its true era of mass adoption.

The internet followed exactly this arc. Take the fiber-optic cables laid in 1999: the bubble burst and most companies died, but the cables remained. Google, Amazon, and Facebook grew up on top of those fibers. The bubble isn't the opposite of prosperity -- the bubble is the installation cost of prosperity.

The explanation is logically elegant. Today's GPU clusters, data centers, and power grid expansions are this era's "undersea cables." In the short term it looks like a bubble; in the long term it's a necessary episode of overinvestment. Because if everyone were rational, no one would build the road before the traffic arrives.

But knowing this framework doesn't ease my anxiety. Because you never know whether you're standing in 1997, 1999, or 2003. When you're inside it, you can never tell when the installation phase ends, when the crash begins, or when the deployment phase arrives.

So my contradiction remains: I believe AI will change everything, and I also believe there is an enormous bubble. Both of these things are true at the same time. And history tells us that admitting "I don't know the timeline" may be the only honest position.